The world is actively using AI to make our lives more efficient and safe — from creative writing to safer autonomous vehicles to drug discovery.

Underneath all this is a common denominator: “code”. We use code to train and build AI models, and to build the harnesses and tooling that turn raw models into useful applications. The earliest AI tooling was handwritten, but AI can now generate code itself at a speed and scale unmatched by humans. Platforms are struggling to meet the resulting scale requirements, and GitHub forecasted a 10x jump to 14 billion commits in 2026. The barrier to building an application has never been lower, but it comes with hidden cleanup costs in the long run.

Who is writing AI-generated code, who is using it, and what is the cleanup cost?

The core set of users behind AI-generated code should fit into a handful of archetypes:

- The Inventors: these are the people and companies, including OpenAI, Anthropic, and Google, behind the core AI concepts, large language models (LLMs), and standards like MCP.

- The Researchers: academic labs, independent research groups, and benchmark creators who generate the long tail of ideas, talent, and evaluation methods the field runs on.

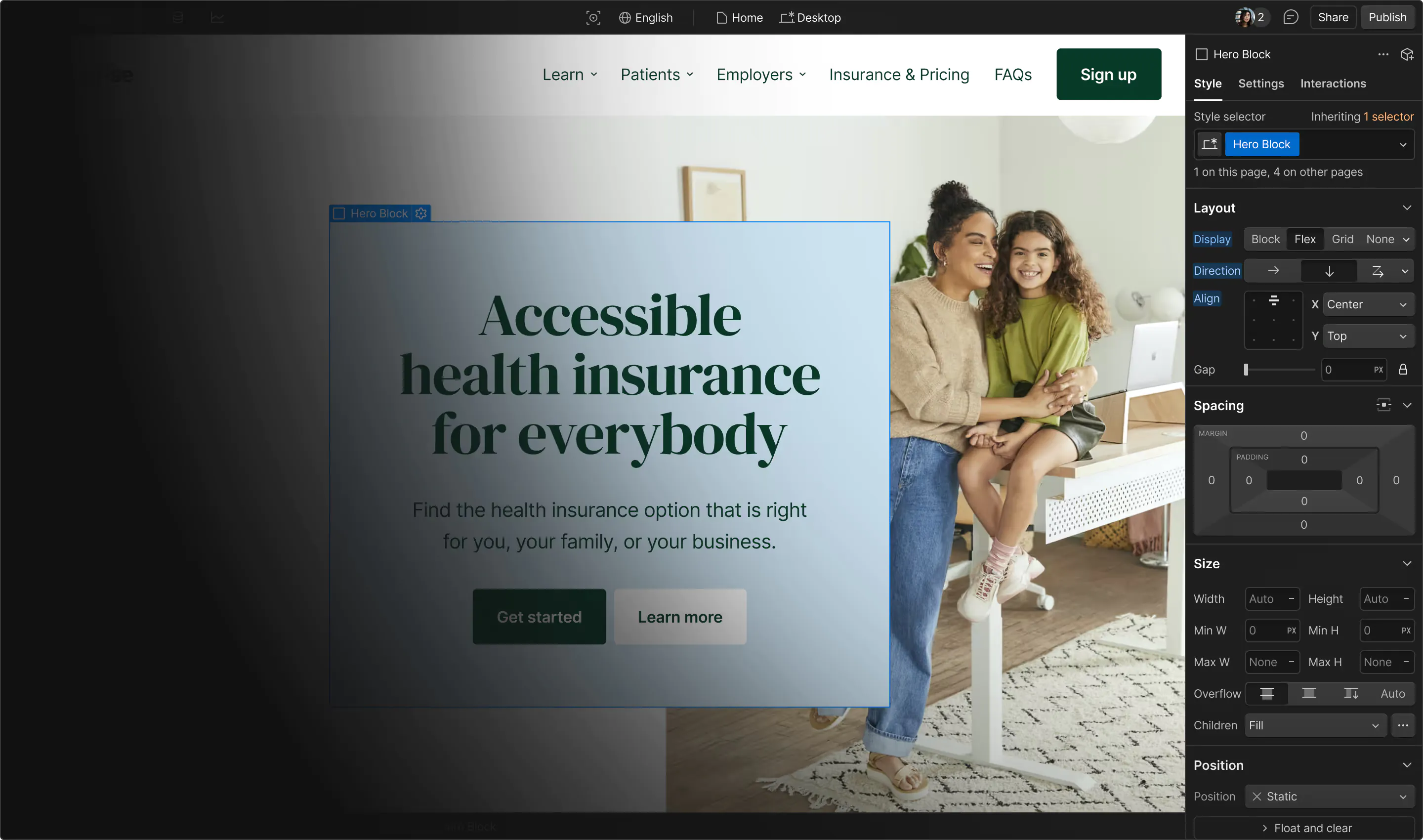

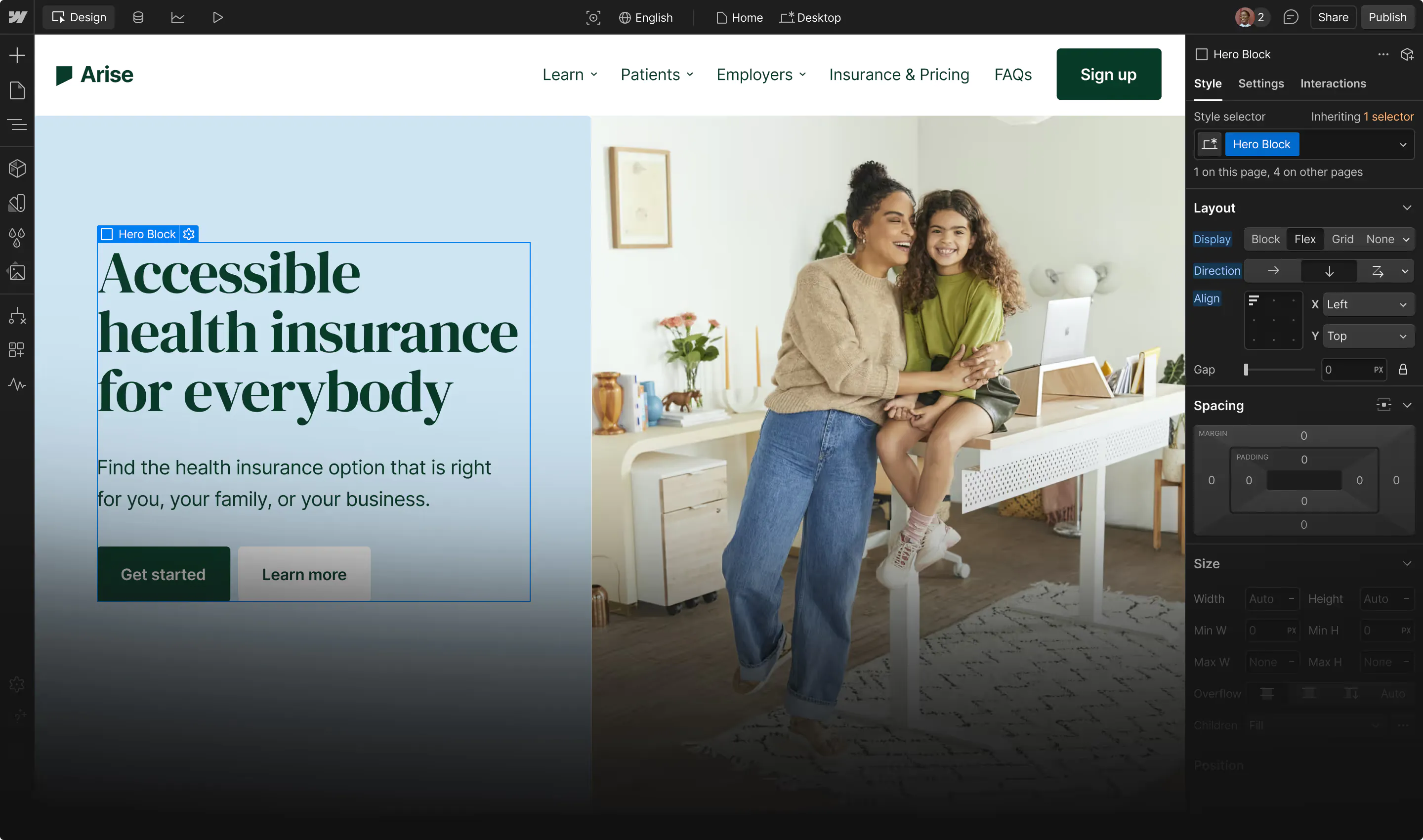

- The Platforms: the distributors, marketplaces, and tooling providers (GitHub, Hugging Face, Cursor, Apple, Webflow) whose policies and defaults shape what everyone else can build, ship, and market.

- The Engineering Orgs: in-house engineering teams at companies of all sizes, rethinking how they operate and embed AI into their products and employee workflows. Not just at tech companies, but healthcare providers, grocery chains, oil refiners, and beyond.

- The Independent Developers: these are power users who also build new AI applications or bridge existing solutions. They can be open-source developers, freelancers, or third-party developers creating apps within ecosystems such as Apple App Store or Webflow marketplace.

- The Citizen Developers: these are non-engineers (PMs, designers, marketers, analysts) who previously had little or no coding ability but can now generate working code and ship applications.

- The Regulators: these are governments, standards bodies, and sector-specific oversight entities shaping how AI can be built, deployed, and audited. Their decisions (EU AI Act, US executive orders, sector rules) increasingly define the guardrails everyone else operates within.

- The Adversaries: threat actors ranging from individuals to hacktivist groups to nation states. As frontier AI models gain serious offensive capabilities, the gap between the attack and defense capabilities is widening fast.

There is barely a B2B or B2C solution untouched by AI, which means nearly everyone is a user of AI-generated code. To keep this post focused, we'll set aside the Foundation and Distribution layers and zoom in on the Building layer: the Engineering Orgs, Independent Developers, and Citizen Developers actually generating, shipping, and maintaining the code. The hidden costs concentrate here, and so do the levers to do something about them.

Before we jump into these hidden costs, let's take a sneak peek at the AI-generated code benefits.

Shared benefits across the building layer

AI has enabled builders to develop and ship at a velocity never seen before. New API endpoints are being developed, tested, and shipped in 30 minutes to a few hours, while bug fixes and prototypes get done in downtime as brief as a short flight delay. Internal tools and automation are also being developed faster, boosting productivity across the entire organization. This lets leaner teams and solo entrepreneurs increase their capacity without additional headcount.

Another core benefit is the democratization of development. While engineers are working on complex features, citizen developers are able to build prototypes or fix paper cuts in the product.

The users of AI-enabled products are also able to move faster, even from the comfort of their mobile devices. The following LinkedIn post was shared by a Webflow customer:

“Went to the gym after my shift was over. Laptop was closed. I was already away. A teammate urgently needed a full CMS collection export as a CSV. Hundreds of items, all fields included. I opened Claude on my phone. Described what I needed. Claude connected to the CMS through the MCP, pulled everything in paginated batches, mapped every field correctly, and handed back a clean structured CSV ready to share. Webflow MCP + Claude is one of the best bridges I’ve used in a production workflow. Every item, every field, zero data loss. The tools are ready. Most people just haven’t connected them”

Another solid benefit, and one that is talked about less often, is AI-augmented learning, reviewing, and testing. AI assistants are now integrated across collaboration and documentation platforms, code hosting platforms, and the internet broadly. This lowers the barrier to learning unfamiliar technologies and makes understanding existing code and architecture much easier and more time-efficient. Builders often spend time planning their work with an AI assistant before the actual execution.

Unlike humans, AI does not tire out or need sleep and can reuse best practices for AI development and reviews to keep things consistent and pattern-aware. For a team of junior developers, AI is able to raise the floor by catching obvious mistakes early.

The benefits above are immense and a reason why AI is so widely adopted. However, some of these benefits are often front-loaded and it takes time for us to see the hidden cost in the long run. These costs often land further away from the wins and accolades.

Cleanup costs across the building layer

The engineering orgs

Engineering organizations have been the biggest beneficiaries of AI augmentation, but they are also the ones that accumulate the largest cleanup cost in the long run.

Humans are still required to be in the loop for high-risk changes. The burden of reviewing most of the high-risk code written within an organization falls on the senior engineers who have the contextual understanding.

Engineers who lean heavily on AI, especially those early in their careers, are prone to erosion of their software engineering skills. They may also find it hard to move to the next level on the career ladder if their thoughts are not their own.

Another huge hidden cost of AI-generated code is quality debt. In the quest to move fast, and with AI in charge of low-risk work or reviews, the code is prone to duplication and subtle logic flaws that can be exploited later. It also leaves the team with a weak contextual understanding of the AI-augmented work in the long run. Incidents can run longer when nobody owns or fully understands the impacted surface area.

Engineering orgs can also be hit with availability issues caused by AI vendor concentration. If a heavily relied-on AI coding vendor has downtime, engineering productivity drops. If the vendor behind the product's AI integration is down, the customers feel the pain. And if the product relies completely on AI without a manual workflow, the AI vendor's downtime is your downtime.
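One way to picture the manual-workflow point is a small Python sketch of a feature that degrades instead of failing when the AI vendor is unreachable. Everything here is an assumption for illustration: `VendorUnavailable`, `ai_summarize`, and the truncation fallback are hypothetical names and behavior, not any vendor's real API.

```python
from typing import Callable


class VendorUnavailable(Exception):
    """Hypothetical error raised when the AI vendor cannot be reached."""


def summarize_with_fallback(
    text: str,
    ai_summarize: Callable[[str], str],
    max_chars: int = 80,
) -> tuple[str, str]:
    """Return (summary, source), where source is "ai" or "manual"."""
    try:
        return ai_summarize(text), "ai"
    except VendorUnavailable:
        # Degraded but working path: plain truncation on a word boundary.
        if len(text) <= max_chars:
            return text, "manual"
        return text[:max_chars].rsplit(" ", 1)[0], "manual"
```

Returning the source alongside the result lets the caller tell users they are seeing a degraded answer, and lets the team measure how often the fallback is actually exercised.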

AI productivity gains do not come for free. AI-augmented development carries a large operating cost, and most companies still do not understand AI budgeting. Higher token burn per developer is being glorified and equated with higher productivity, which can lead to wasteful spending.
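A rough way to see why raw token burn is a poor productivity signal is to tie spend to an outcome. The Python sketch below computes cost per merged change. The metric, the function name, and the prices used in the usage note are illustrative assumptions, not figures from any vendor.

```python
def cost_per_merged_change(
    tokens_used: int,
    price_per_million_tokens: float,
    merged_changes: int,
) -> float:
    """Spend divided by shipped outcomes; infinite when nothing was merged."""
    spend = tokens_used / 1_000_000 * price_per_million_tokens
    if merged_changes == 0:
        # Tokens were burned but nothing shipped.
        return float("inf")
    return spend / merged_changes
```

With a hypothetical price of 10.0 per million tokens, a developer who burns 50 million tokens and merges 25 changes costs 20.0 per change, while one who burns the same tokens and merges 5 costs 100.0 per change.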

Last but not least is the security cost, which deserves its own section.

Overall Risk level: High but distributed

The independent developers

Independent developers (freelancers, OSS maintainers, third-party app developers) can see significant gains from AI adoption, but it comes with a risk to their personal brand. The larger volume of code is harder to review, and there are no peers to review it or clean it up. There is no legal team preventing copyright violations in your work or from your work. Unintended mistakes or bad reviews can get a developer suspended from a freelancer platform or get their apps removed from an ecosystem. One vulnerable plugin shipped to thousands of customers, one license violation in a freelance deliverable, or one buggy release on the App Store can tarnish a developer's standing in that ecosystem.

Open source maintainers face a particularly cruel asymmetry: it costs a contributor five minutes to generate a low-quality AI pull request, and hours for the maintainer to verify and reject it. The curl project ended its bug bounty program in January 2026 after this asymmetry became unsustainable, and it was not the last project to do so.

Overall Risk level: High and personal

The citizen developers

This is the newest archetype and includes PMs, designers, marketers, and analysts. The citizen developers can now prototype and showcase their ideas instead of asking someone to build it for them. They can also fix minor issues in the code that are often lower priority but improve the customer quality of life. These developers can now also build internal tools which in the past required justification and prioritization of developer resources.

However, the code from citizen developers often carries quality issues. While the code solves the problem, it may contain duplication and lack tests, error checking, logging, and any security considerations. If their work touches high-risk areas such as authentication or PII, an engineering review will help fix these issues and also help them learn the tricks of the trade. Lighter, low-risk changes may go straight to production. While bad code from citizen developers is less likely to bring a company down, a high concentration of such changes can reduce code quality in the long run.
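The split between high-risk changes that need an engineering review and low-risk changes that can ship directly could be sketched as a path-based check. This is a minimal Python illustration under stated assumptions: the pattern list and the function name are hypothetical, and a real organization would define its own risk areas.

```python
from fnmatch import fnmatchcase

# Hypothetical patterns for areas an organization might treat as high risk.
HIGH_RISK_PATTERNS = ["*auth*", "*pii*", "*payment*"]


def needs_engineering_review(changed_files: list[str]) -> bool:
    """True if any changed file path matches a high-risk pattern."""
    return any(
        fnmatchcase(path.lower(), pattern)
        for path in changed_files
        for pattern in HIGH_RISK_PATTERNS
    )
```

The patterns deliberately over-match (a file named `author.md` would also be flagged). For a gate like this, sending a harmless change to review is cheaper than letting a risky one through.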

When citizen developers contribute code to production, they are usually focused on solving a specific problem rather than thinking about long-term maintainability or incident response. If something breaks later, the original author may not have the depth to debug it, and fixes typically fall on the engineering org to test and ship, adding to their workload.

Overall Risk: Medium but can aggregate fast

The ecosystem problem

We just discussed the different archetypes and the hidden costs within their own surfaces. However, there is a second-order effect when independent developers build for an ecosystem or platform owned by a larger company. This includes not just the Apple and Google app stores, but marketplace ecosystems from the likes of Webflow, Shopify, and GitHub. The ecosystem owners share responsibility for the AI-generated code written by individual developers.

When customers install an app and something goes wrong, they blame the platform, not the developer. This is because the marketplace reviewed the app and allowed it to exist within its ecosystem. Every bad app that slips through the cracks reduces customer confidence in the ecosystem as a whole.

With AI, independent developers are shipping their creations faster, which means more submissions and more reviews for ecosystem owners, including a high volume of low-quality and insecure code. Even where it was once possible to manually review every app, it no longer is at the AI-augmented submission rate. Emerging ecosystems are now investing more in automated reviews, security guidelines, and developer education.

In addition to new app submissions, approved apps are now evolving with the help of AI. Developers are submitting updated app versions with increased capabilities, but with problems similar to those discussed above: requests for broader permissions, insecure code, or license contamination. Ecosystem owners now have to deal with this problem without burning their social contract with the developer community.
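One automated check an ecosystem owner might run on app updates is a permission diff between the approved version and the new submission. The Python sketch below is illustrative only: the scope names in the usage note are hypothetical and do not describe any specific marketplace's permission model.

```python
def added_permissions(previous: set[str], updated: set[str]) -> set[str]:
    """Permissions requested by the update that the approved version lacked."""
    return updated - previous


def requires_manual_review(
    previous: set[str], updated: set[str], sensitive: set[str]
) -> bool:
    """Escalate only when the update adds a sensitive permission."""
    return bool(added_permissions(previous, updated) & sensitive)
```

For example, an update that goes from `{"cms:read"}` to `{"cms:read", "cms:write"}` would be escalated if `"cms:write"` is in the sensitive set, while an update that requests no new scopes passes straight to the automated checks.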

GitHub, being both an enterprise solution and a community code hosting platform, is facing infrastructure and resilience challenges from the sheer volume of AI-generated code produced through its AI product and hosted on its platform. This points to larger ecosystems grappling with scaling issues and increased operating costs.

Overall Risk: High but quiet

The security cleanup bill

More code, more bugs

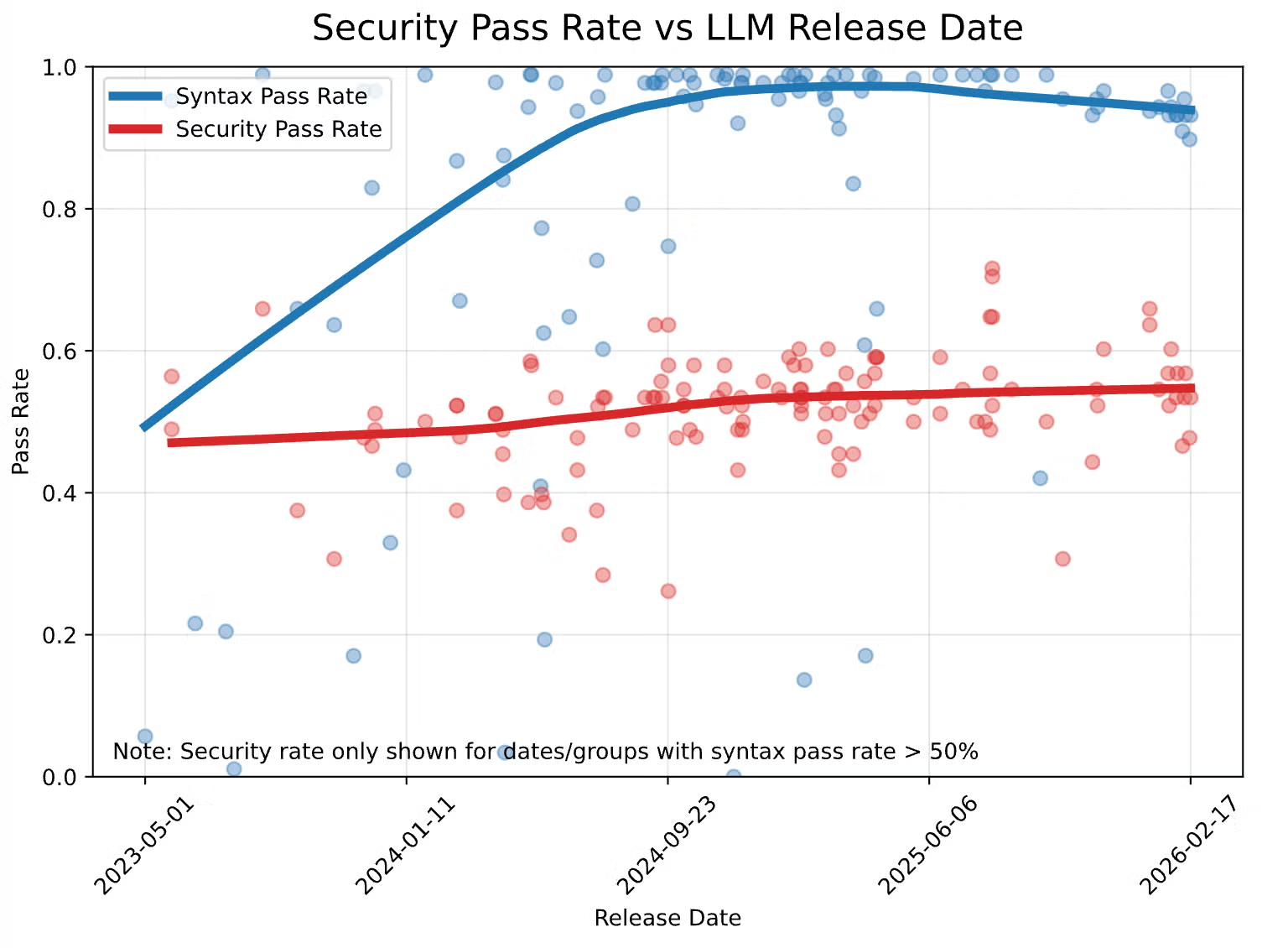

AI models have evolved over the years, and they are now great at syntactic and semantic correctness. However, when no security guidelines are provided, their scores on security benchmarks have improved only sluggishly.

The trend published by Veracode is concerning given that more and more of the code being written is AI-generated, with OpenAI claiming that the percentage has gone up to 80%. The latest AI models still write code with a low security pass rate for serious vulnerabilities such as Cross-Site Scripting and Log Injection. The models also score low on security in programming languages like Java.
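For readers less familiar with the two vulnerability classes named above, here is a minimal Python sketch of the defensive side. It is not taken from the Veracode benchmark. The function names are hypothetical, and the sketch only shows the general idea of neutralizing untrusted input before it reaches a log line or an HTML page.

```python
import html
import logging

logger = logging.getLogger("app")


def sanitize_for_log(value: str) -> str:
    """Escape CR and LF so user input cannot forge extra log lines."""
    return value.replace("\r", "\\r").replace("\n", "\\n")


def log_login_attempt(username: str) -> str:
    """Log a login attempt with the untrusted username neutralized."""
    message = f"login attempt for user={sanitize_for_log(username)}"
    logger.info(message)
    return message


def render_greeting(name: str) -> str:
    """Escape untrusted input before placing it inside HTML."""
    return f"<p>Hello, {html.escape(name)}</p>"
```

Without `sanitize_for_log`, a username containing a newline followed by a fake log entry would show up as a separate, legitimate-looking line. Without `html.escape`, a name containing a `<script>` tag would run in the visitor's browser.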

AI hallucinations of software dependencies have seemingly improved, but research shows that AI-written code can still invent package names or misspell them, an opening that typosquatting attackers use for supply chain attacks.
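A lightweight guard against invented or misspelled package names is to compare each dependency against a list of packages the team already trusts. The Python sketch below uses the standard library's `difflib` for fuzzy matching. The allowlist contents, the function name, and the 0.8 similarity cutoff are assumptions chosen for illustration.

```python
import difflib

# Hypothetical allowlist of packages the team has already vetted.
KNOWN_PACKAGES = {"requests", "numpy", "pandas", "flask"}


def check_dependency(name: str, known: set[str] = KNOWN_PACKAGES) -> str:
    """Classify a dependency name as ok, suspicious, or unknown."""
    name = name.lower()
    if name in known:
        return "ok"
    # A near miss of a trusted name is the classic typosquat signature.
    close = difflib.get_close_matches(name, sorted(known), n=1, cutoff=0.8)
    if close:
        return f"suspicious: looks like '{close[0]}'"
    return "unknown: verify before installing"
```

A check like this only catches near misses of names on the allowlist. A fully invented package name falls into the "unknown" bucket and still needs a human or a registry lookup before it is installed.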

The patch window has closed

While AI models are busy writing insecure code, their offensive capabilities have seen a dramatic jump. The barrier to vulnerability research has dropped, and AI models now reason at the level of top security researchers, if not beyond. In the past two years alone, the time between a vulnerability appearing in a system and its exploitation has gone down from months to days, and in many cases exploitation begins before a patch even ships.

Anthropic recently collaborated with the most critical software providers in the world under Project Glasswing and shared their unreleased model Claude Mythos. Mythos found 271 vulnerabilities in Firefox alone, including issues that had survived decades of human security review.

While Mythos is a starting point, open source is catching up fast. Hadrian’s research team has cataloged 70 open source AI pentest tools, up from 5 in April 2023. These tools can work relentlessly and in parallel to find vulnerabilities in every piece of software and code that exists on the internet.

Defenders’ burnout

With more code, more bugs, and more exploits, security practitioners are facing serious burnout. While the vulnerability count and the noise have gone up, the security headcount has not. Security practitioners are now spending more time dealing with zero days, most recently from a relentless run of package supply chain incidents. Vercel and Mercor are some of the latest victims of this trend. Vercel was breached through a compromised AI tool's OAuth token, and Mercor lost roughly four terabytes of data through the LiteLLM open-source AI gateway, exposing training methodologies for OpenAI, Anthropic, and Meta in the process. Both incidents trace back to the same root: AI tooling has become the new supply chain attack surface, and security practitioners are racing to reduce the attacker-to-defender capacity gap.

The Cloud Security Alliance (CSA) recently published a paper urging security leaders to build a Mythos-ready security program and to prepare for burnout, with the volume of vulnerability disclosures expected to exceed anything we have experienced before. They advise security teams to increase capacity and adopt agentic workflows for security assessments and incidents.

Along with the security incidents, the bug bounty landscape has changed, with script kiddies using AI to find and report vulnerabilities. Public bug bounty programs now see more AI slop than serious reports. The triage burnout (even with AI assistance) has been serious enough for the curl and HackerOne-sponsored Internet Bug Bounty programs to be suspended.

FIRST, a leading security non-profit, recently predicted that 2026 will surpass 50,000 CVEs for the first time. Their guidance to organizations is to scale their security operations, but most can’t keep up.

NIST itself is buckling. In April 2026, the agency announced it would stop enriching most CVEs in the National Vulnerability Database, citing a 263% surge in submissions between 2020 and 2025. The institution that anchors the world's vulnerability metadata is publicly throwing its hands up. This is indicative of future trouble for other similar vulnerability data ecosystems.

What can we do about it: reducing the cleanup cost

The cleanup cost is real, and there is no silver bullet to fix it. The teams and ecosystems that are managing it share a few common patterns, and the patterns differ by where the cost lands. Here is a prioritized view of what to do about the risk categories that hurt the most.

Where this leaves us

AI-augmented development is a generational shift on the scale of the industrial revolution. Just as machines reshaped what humans built and how they built it, AI is reshaping how software gets created and who can create it. The barrier to building is low, innovation is at its peak, and entire categories of work are being redefined in months instead of decades.

The hidden costs are also real, and they tend to land far from where the velocity wins were booked. We discussed reviewer fatigue inside engineering orgs, personal reputation risk for independent developers, quality issues that surface years after shipping, ecosystem-wide trust damage when something goes wrong, and a security landscape where attackers move at machine speed while defenders are still operating at human speed. The asymmetry between speed of creation and speed of cleanup is what defines the cost.

The teams and ecosystems that win with AI-generated code over the long run aren't the ones moving fastest. They're the ones that built a method behind the madness. The winners are the ones already accounting for the cleanup strategy from day one. AI will keep stretching the boundaries of what we can imagine. The question is whether the practices around it advance fast enough to keep up.